Overview

Vision-Language-Action (VLA) models achieve over 95% success on standard benchmarks. Through systematic experiments, however, we find that current state-of-the-art VLA models largely ignore language instructions. Prior work lacks (1) systematic semantic perturbation diagnostics, (2) a benchmark that forces language understanding by design, and (3) linguistically diverse training data.

This paper constructs the LangGap benchmark, based on a four-dimensional semantic perturbation method that varies instruction semantics while keeping the tabletop layout fixed, revealing language understanding deficits in π0.5. Existing benchmarks such as LIBERO assign only one task per layout, underutilizing the available objects and target locations. LangGap instead diversifies pick-and-place tasks under identical layouts, so a model must understand the instruction to succeed.

Experiments show that targeted data augmentation can partially close the language gap: success rate improves from 0% to 90% with single-task training and from 0% to 28% with multi-task training. However, as the semantic diversity of the extended tasks increases, model learning capacity proves severely insufficient, and even trained tasks perform poorly. This reveals a fundamental challenge for VLA models in understanding diverse language instructions, which is precisely the long-term value of LangGap.

Details

1. Benchmark & Dataset

LangGap benchmark: 99 tasks total — 40 original LIBERO tasks + 59 extended semantic perturbation tasks. We provide a training dataset of 56 tasks: 16 self-collected extended tasks (150 demos each) + 40 original tasks (50 demos each), totaling ~4,100 trajectories.

2. Problem Discovery

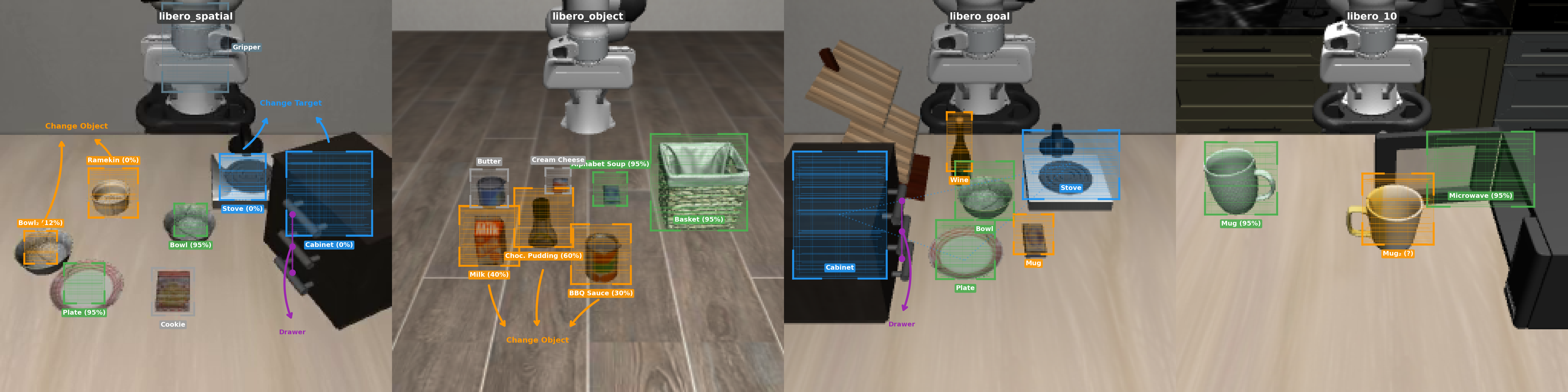

When changing the instruction from “put bowl on plate” → “put bowl on stove” in the same visual scene, the model still executes the original action (goes to plate), achieving 0% success. This reveals that VLAs perform vision-to-action pattern matching rather than genuine language understanding.

We design a four-dimensional semantic perturbation diagnostic — Change Object, Change Target, Spatial Description, and Drawer Action — keeping the visual scene identical and only modifying the instruction:

| Category | Tasks | Episodes | Success Rate |
|---|---|---|---|
| Original (LIBERO) | 40 | 800 | 93.8% |
| Extended (Ours) | 59 | 1,180 | 21.4% |
| Change Object | 38 | 760 | 29.3% |
| Change Target | 13 | 260 | 0.0% |
| Spatial Description | 5 | 100 | 11.0% |
| Drawer Action | 3 | 60 | 31.7% |
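As a consistency check on the table above, the following minimal sketch pools the four perturbation categories into the overall "Extended" row. It assumes the overall figure is an episode-weighted average of the category rates; the function name `pooled_success_rate` is illustrative and not part of any LangGap tooling.

```python
# Per-category results copied from the table above:
# (number of tasks, number of episodes, success rate in %).
categories = {
    "Change Object": (38, 760, 29.3),
    "Change Target": (13, 260, 0.0),
    "Spatial Description": (5, 100, 11.0),
    "Drawer Action": (3, 60, 31.7),
}


def pooled_success_rate(rows):
    """Episode-weighted success rate (in %) over (tasks, episodes, rate) rows.

    Assumption: the overall rate pools episodes across categories rather
    than averaging the category rates with equal weight.
    """
    rows = list(rows)
    total = sum(episodes for _, episodes, _ in rows)
    successes = sum(episodes * rate / 100.0 for _, episodes, rate in rows)
    return 100.0 * successes / total


total_tasks = sum(tasks for tasks, _, _ in categories.values())
total_episodes = sum(episodes for _, episodes, _ in categories.values())
extended_rate = pooled_success_rate(categories.values())

print(total_tasks, total_episodes, round(extended_rate, 1))
```

Under that assumption the category rows reproduce the 59 tasks, 1,180 episodes, and 21.4% success rate reported for the extended set.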

3. Design Principles

- Same-Scene Multi-Task: Multiple tasks share identical initial visual states, eliminating visual shortcuts. A model that ignores language achieves at most a 1/k success rate (k = tasks per scene).

- Instruction-Level Train/Eval Split: The training tasks do not cover all test tasks; the held-out evaluation set contains unseen language instructions to test compositional generalization.

- Physical Feasibility Validation: All extended tasks are verified in the LIBERO simulator to ensure graspability, reachability, and detectability.
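The first two principles can be illustrated with a small sketch. The object and target names, the instruction template, and the choice of held-out instructions below are all hypothetical; they are not the actual LangGap task list. The bound also assumes that the k tasks in a scene are mutually exclusive, so a single instruction-independent behavior can satisfy at most one of them.

```python
from fractions import Fraction
from itertools import product

# Hypothetical scene contents; illustrative only, not taken from LangGap.
objects = ["bowl", "mug"]
targets = ["plate", "stove", "cabinet"]


def enumerate_instructions(objects, targets):
    """All pick-and-place instructions available in one fixed scene."""
    return [f"put the {obj} on the {tgt}" for obj, tgt in product(objects, targets)]


def language_blind_upper_bound(num_tasks_in_scene):
    """Best mean success rate of a policy that ignores the instruction.

    Assumes the tasks in a scene are mutually exclusive, so one fixed
    behavior solves at most one of the k tasks.
    """
    return Fraction(1, num_tasks_in_scene)


instructions = enumerate_instructions(objects, targets)
k = len(instructions)
bound = language_blind_upper_bound(k)

# Instruction-level split: hold out some instructions from the same scene.
# Taking every third instruction is an arbitrary choice for illustration.
held_out = set(instructions[::3])
train = [ins for ins in instructions if ins not in held_out]

print(k, bound, len(train), len(held_out))
```

Because the held-out instructions share their initial visual state with the training instructions, a policy cannot tell them apart from the image alone.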

4. Data Collection Pipeline

- Scalable & Diverse Generation: We employ a scripted, waypoint-based collection pipeline to efficiently and stably gather 150 successful episodes per task. While the waypoints are hard-coded for each specific task, the simulator introduces slight natural variations in the initial tabletop layouts. This ensures the collected trajectories are visually and dynamically diverse, preventing models from merely memorizing rigid, identical paths.

- Hierarchical Control Architecture: Each task utilizes a custom script that decomposes the pick-and-place process into multiple sequential waypoints. At the high level, we apply pure Proportional (P) control to calculate positional errors and output continuous action commands. These commands are then executed by the simulator’s low-level OSC (Operational Space Control) PD controller, achieving seamless, highly precise continuous control.
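The high-level loop described above can be sketched as follows. This is a toy illustration under stated assumptions, not the authors' collection script: the gain, tolerance, waypoint coordinates, and function names are hypothetical, and the simulator's low-level OSC controller is replaced by a simple position update.

```python
import math


def distance(a, b):
    """Euclidean distance between two points of equal dimension."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def p_control_action(position, waypoint, gain=5.0, max_action=1.0):
    """Proportional control: gain * positional error, clipped per axis."""
    return [
        max(-max_action, min(max_action, gain * (w - p)))
        for p, w in zip(position, waypoint)
    ]


def follow_waypoints(start, waypoints, step_scale=0.02, tol=0.005, max_steps=1000):
    """Visit waypoints in order; return the final position and steps used.

    The update `position += step_scale * action` is a toy stand-in for the
    low-level controller, which is not modeled here.
    """
    position = list(start)
    steps = 0
    for waypoint in waypoints:
        while distance(position, waypoint) > tol and steps < max_steps:
            action = p_control_action(position, waypoint)
            position = [p + step_scale * a for p, a in zip(position, action)]
            steps += 1
    return position, steps


# Hypothetical pick-and-place waypoints (x, y, z) in meters.
waypoints = [
    (0.10, 0.00, 0.20),  # hover above the object
    (0.10, 0.00, 0.05),  # descend to grasp height
    (0.10, 0.00, 0.20),  # lift
    (0.30, 0.15, 0.20),  # move above the target
    (0.30, 0.15, 0.08),  # lower to place
]
final_position, steps_used = follow_waypoints((0.0, 0.0, 0.2), waypoints)
print(final_position, steps_used)
```

Gripper open and close commands are omitted for brevity. In this sketch, variation between episodes would come only from the start position, mirroring the idea that hard-coded waypoints still yield different trajectories when the initial layout varies.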

5. Results

| Method | Orig. | Ext. | Ch.Obj | Ch.Tgt |
|---|---|---|---|---|
| π0.5 | 93.8% | 21.4% | 29.3% | 0.0% |
| π0 | 48.3% | 8.6% | 10.8% | 0.0% |
| π0-FAST | 47.5% | 2.7% | 3.1% | 2.3% |
| SmolVLA | 38.0% | 6.4% | 7.6% | 0.0% |
| π0.5-Ours (45) | 89.5% | 22.8% | 28.4% | 6.2% |
| π0.5-Ours (56) | 85.5% | 20.4% | 27.5% | 5.0% |

| Config | Eval | Baseline | Ours |
|---|---|---|---|
| Single-task (1 ext) | 1 task | 3.75% | 90.0% |
| 6-task (1+5 ext) | 5 ext | 0.0% | 28.0% |
| 45-task (40+5 ext) | 5 ext | 0.0% | 4.0% |
| 16-task (16 ext) | 16 ext | 26.2% | 6.2% |
| 56-task (40+16 ext) | 16 ext | 26.2% | 27.5% |
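To make the trend in the training-configuration table easier to read, the short sketch below computes the absolute change in success rate (in percentage points) for each configuration. The numbers are copied from the table; the helper name `absolute_gains` is illustrative.

```python
# (config, baseline %, ours %) copied from the table above.
results = [
    ("Single-task (1 ext)", 3.75, 90.0),
    ("6-task (1+5 ext)", 0.0, 28.0),
    ("45-task (40+5 ext)", 0.0, 4.0),
    ("16-task (16 ext)", 26.2, 6.2),
    ("56-task (40+16 ext)", 26.2, 27.5),
]


def absolute_gains(rows):
    """Map each config to (ours - baseline) in percentage points."""
    return {config: round(ours - baseline, 2) for config, baseline, ours in rows}


gains = absolute_gains(results)
for config, gain in gains.items():
    print(f"{config:<22} {gain:+.2f} pp")
```

The gain shrinks sharply as more tasks are trained jointly, and the 16-task configuration falls below its baseline, which is consistent with the capacity limitation discussed in the next section.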

6. Long-Term Value

As semantic diversity of tasks increases, model learning capacity proves severely insufficient — even trained tasks perform poorly. This reveals a fundamental challenge that is architecture-agnostic: all tested models (π0.5, π0, π0-FAST, SmolVLA) exhibit the same language gap. LangGap provides a systematic diagnostic tool that remains valuable as new VLA architectures emerge, precisely because the language gap is a persistent problem that current training paradigms have yet to solve.