Overview

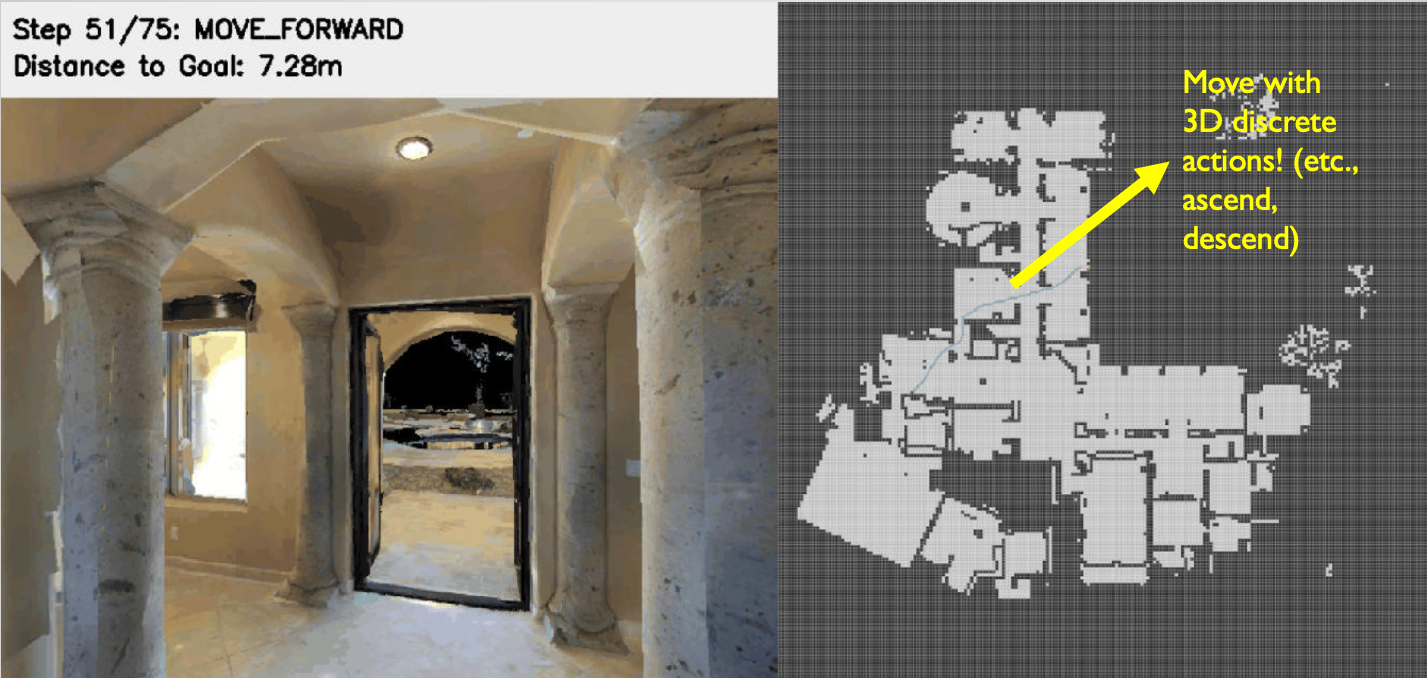

Built a robust pipeline to generate diverse 3D navigation trajectories in the Habitat simulator for training vision-language navigation (VLN) policies on aerial robots.

Simulator: Habitat with 90 indoor scenes.

Pipeline

- Start-Goal Pair Generation: For each of the 90 scenes, generate 200–300 random 2D start-goal pairs as navigation endpoints.

-

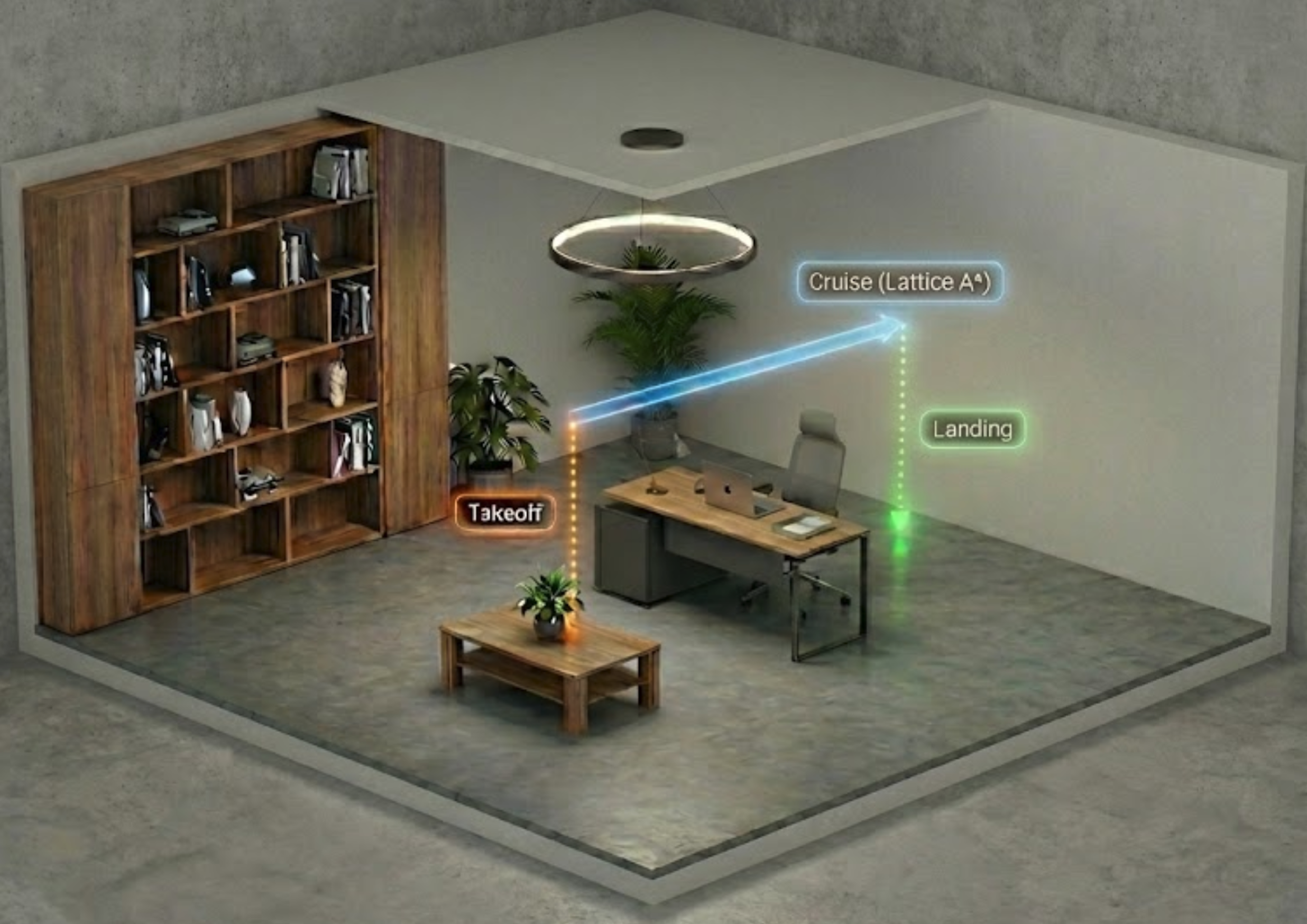

2D Cruise Path Planning — Determine a suitable constant cruising altitude for each scene, then plan a natural 2D path at that altitude using Lattice A*. Unlike standard Grid A* which produces rigid right-angle turns, Lattice A* plans over motion primitives in continuous state space \((x, y, \theta)\), producing smooth paths where the agent turns while moving forward. Heuristic:

\[H(s) = \frac{\|p - p_{goal}\|}{L} + \lambda \cdot |\Delta\theta|\]

The \(\lambda \cdot |\Delta\theta|\) term penalizes sharp turns, yielding smooth, realistic flight trajectories.

- 3D Trajectory Assembly: Prepend a takeoff segment and append a landing segment to each cruise path, forming a complete 3D trajectory. Collect RGB-D observations along the full path as video.

- Instruction Generation: Use a video-to-text model to generate natural language navigation instructions from the collected observation videos, producing a complete VLN dataset.
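The start-goal pair generation step could be sketched roughly as follows. This is a minimal illustration, not the pipeline's actual code: the list of free 2D cells, the `min_dist` separation filter, and the function name `sample_start_goal_pairs` are all assumptions introduced here, since the text above only states that 200–300 random pairs are drawn per scene.

```python
import math
import random


def sample_start_goal_pairs(free_cells, n_pairs, min_dist, rng=None, max_tries=10_000):
    """Sample (start, goal) pairs from free 2D cells.

    Assumption: pairs closer than `min_dist` are rejected so that every
    trajectory has a non-trivial length. The source does not state this filter.
    """
    rng = rng or random.Random()
    pairs = []
    tries = 0
    while len(pairs) < n_pairs and tries < max_tries:
        tries += 1
        start, goal = rng.sample(free_cells, 2)  # two distinct cells
        if math.dist(start, goal) >= min_dist:
            pairs.append((start, goal))
    return pairs


# Hypothetical 10 x 10 obstacle-free area standing in for one scene.
free_cells = [(float(x), float(y)) for x in range(10) for y in range(10)]
rng = random.Random(0)
n_pairs = rng.randint(200, 300)  # per-scene count, as described above
pairs = sample_start_goal_pairs(free_cells, n_pairs, min_dist=3.0, rng=rng)
```

In a real scene the free cells would come from the simulator's occupancy information at the chosen cruising altitude rather than from a synthetic grid.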
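To make the Lattice A* heuristic more concrete, here is a small sketch of the heuristic and of motion-primitive expansion over \((x, y, \theta)\). Several details are assumptions, because the text above does not define them: \(L\) is taken to be the forward length of one motion primitive, \(\Delta\theta\) is taken to be the offset between the current heading and the bearing to the goal, and the turn angles passed to `expand` are illustrative values.

```python
import math


def wrap_angle(a):
    """Wrap an angle into [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def heuristic(state, goal_xy, step_len, lam):
    """H(s) = ||p - p_goal|| / L + lambda * |delta_theta|.

    Assumptions: `step_len` plays the role of L, and delta_theta is the
    difference between the heading and the bearing to the goal.
    """
    x, y, theta = state
    dx, dy = goal_xy[0] - x, goal_xy[1] - y
    dist = math.hypot(dx, dy)
    dtheta = wrap_angle(math.atan2(dy, dx) - theta) if dist > 0 else 0.0
    return dist / step_len + lam * abs(dtheta)


def expand(state, step_len, turn_angles):
    """Apply each motion primitive: change heading, then move forward."""
    x, y, theta = state
    successors = []
    for dtheta in turn_angles:
        new_theta = wrap_angle(theta + dtheta)
        successors.append((
            x + step_len * math.cos(new_theta),
            y + step_len * math.sin(new_theta),
            new_theta,
        ))
    return successors


# Hypothetical primitive set: gentle left, straight, gentle right.
primitives = [-math.pi / 6, 0.0, math.pi / 6]
h_aligned = heuristic((0.0, 0.0, 0.0), (4.0, 0.0), step_len=1.0, lam=0.5)
h_sideways = heuristic((0.0, 0.0, math.pi / 2), (4.0, 0.0), step_len=1.0, lam=0.5)
children = expand((0.0, 0.0, 0.0), step_len=1.0, turn_angles=primitives)
```

A state facing the goal gets a lower heuristic value than one at the same position facing sideways, so under these assumptions the search prefers expansions that keep the heading aligned with the goal. A full planner would additionally discretize states for the closed set and check each primitive for collisions.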
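The 3D trajectory assembly step can be illustrated with the sketch below. It assumes a purely vertical takeoff and landing, a z-up coordinate convention, and a fixed number of vertical waypoints; none of these are specified above, and the function name `assemble_trajectory` is hypothetical.

```python
def assemble_trajectory(path_xy, cruise_z, ground_z=0.0, n_vertical=5):
    """Build takeoff + cruise + landing waypoints as (x, y, z) tuples.

    Assumptions: takeoff and landing are straight vertical segments at the
    first and last cruise waypoints, and z is the up axis.
    """
    start, goal = path_xy[0], path_xy[-1]
    climb = cruise_z - ground_z

    # Ascend from the ground, stopping one step below cruise altitude
    # (the first cruise waypoint supplies the point at cruise altitude).
    takeoff = [
        (start[0], start[1], ground_z + climb * i / n_vertical)
        for i in range(n_vertical)
    ]
    # Lift the planned 2D path to the constant cruising altitude.
    cruise = [(x, y, cruise_z) for x, y in path_xy]
    # Descend from just below cruise altitude down to the ground.
    landing = [
        (goal[0], goal[1], cruise_z - climb * i / n_vertical)
        for i in range(1, n_vertical + 1)
    ]
    return takeoff + cruise + landing


# Hypothetical three-waypoint cruise path at 1.5 m altitude.
trajectory = assemble_trajectory([(0.0, 0.0), (1.0, 0.0), (2.0, 1.0)],
                                 cruise_z=1.5, n_vertical=3)
```

RGB-D frames would then be rendered at each waypoint in order, which is what turns the assembled trajectory into an observation video.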